Every modern business aims to be data-driven today.

But most businesses fail to build the right team that can make it happen.

To help you avoid the same trap, I have created this guide. It will help you create the right data engineering team for your company.

With this guide, you will learn answers to important questions, such as “What does a data engineer do?”

Moreover, you will also learn how to implement key data engineering best practices.

Let’s get started.

What is Data Engineering?

Data engineering is the process of collecting and preparing data for analysis.

Data engineers take the first step towards gaining insights from your data.

After data engineers prepare the data, data analysts can derive the right analytics.

Data engineers are also responsible for creating the right data pipeline architecture. This is what moves your data from its source to the destination.

Thus, data engineers are responsible for:

- Collecting your data

- Cleaning and preparing your data

- Migrating your data for analysis
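The three responsibilities above can be sketched in a few lines of Python. This is only a minimal illustration: the sample CSV, the `orders` table, and the function names `collect`, `clean`, and `load` are all assumptions made for this example, and an in-memory SQLite database stands in for a real destination.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a source system.
RAW_CSV = """order_id,customer,amount
1, Alice ,19.99
2,Bob,
3, Carol ,5.00
"""

def collect(raw_text):
    """Collect: read rows from the source (here, an in-memory CSV)."""
    return list(csv.DictReader(io.StringIO(raw_text)))

def clean(rows):
    """Clean and prepare: trim whitespace, drop rows with no amount, cast types."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append((int(row["order_id"]), row["customer"].strip(), float(amount)))
    return cleaned

def load(rows, conn):
    """Migrate: write the prepared rows to the destination used for analysis."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(clean(collect(RAW_CSV)), conn)
loaded = conn.execute(
    "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
).fetchall()
print(loaded)
```

The order with the missing amount is dropped during cleaning, so only complete records reach the destination table.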

What Does a Data Engineer Do?

Here are the main tasks data engineers perform:

| Task | What It Means |
| --- | --- |
| Data ingestion | Pulling data from databases into one place |
| Data transformation | Cleaning and formatting data for analysis |
| Pipeline building | Creating automated systems to move data |
| Data quality | Checking that data is accurate and complete |
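To make the data quality task more concrete, here is a small sketch of a completeness and duplicate check. The function name `check_quality`, the field names, and the sample rows are hypothetical and exist only for illustration.

```python
def check_quality(rows, required_fields):
    """Return a list of human-readable problems found in `rows`."""
    problems = []
    seen_ids = set()
    for i, row in enumerate(rows):
        # Completeness: every required field must be present and non-empty.
        for field in required_fields:
            if row.get(field) in (None, ""):
                problems.append(f"row {i}: missing {field}")
        # Accuracy: an id should appear only once.
        row_id = row.get("id")
        if row_id in seen_ids:
            problems.append(f"row {i}: duplicate id {row_id}")
        seen_ids.add(row_id)
    return problems

# Hypothetical sample data with one empty email and one repeated id.
sample = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 1, "email": "c@example.com"},
]
problems = check_quality(sample, required_fields=["id", "email"])
print(problems)
```

Returning a list of problems, rather than stopping at the first one, lets you report every issue in a batch at once.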

Data Engineering Team Structure

Wondering how you can structure your data engineering team properly?

Here is a simple guide to do so:

| Team Size | Roles to Hire |
| --- | --- |
| Small (1-2 people) | One data engineer who builds basic pipelines |
| Growing (3-5 people) | Add senior data engineer + analytics engineer |
| Enterprise (6+ people) | Specialized roles + data architect |

Small Business (1 – 2 People)

If you are a startup or emerging business, consider hiring only one data engineer.

They can handle your initial data collection and analytics.

Make sure to use tools like Airbyte or Fivetran to maintain your pipeline.

Growing Team (3 – 5 People)

To scale your business, consider adding a senior data engineer. They can help you design a robust data architecture.

Moreover, hiring an analytics engineer can help you manage your data quality. They can also help you understand Power BI dataflows and other important platforms.

Enterprise (6+ People)

Now it’s time to build specialized roles. This includes pipeline engineers and platform engineers.

Moreover, expand your analytics engineering team to keep up.

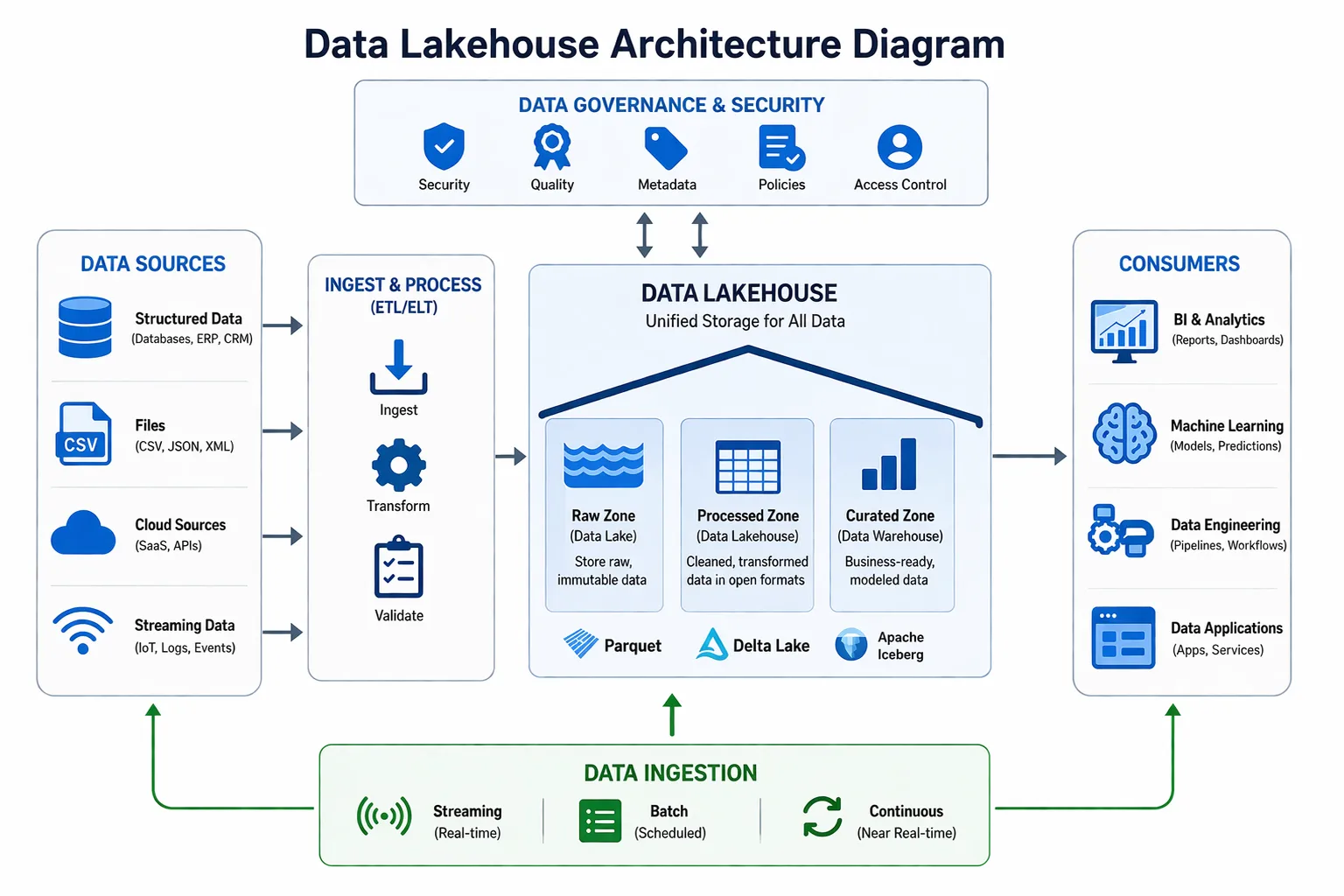

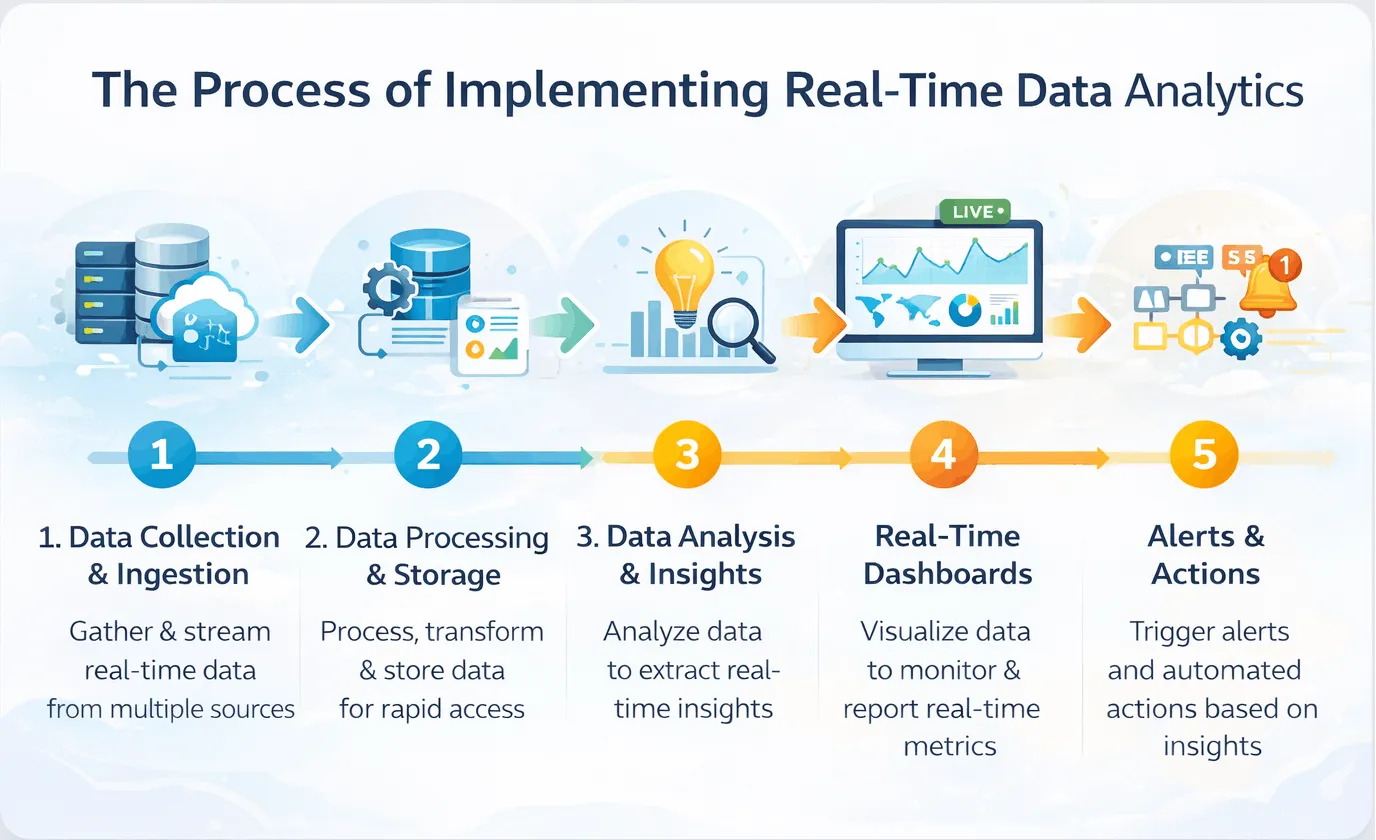

Data Pipeline Architecture

Your data pipeline architecture guides how your data moves through systems.

A typical modern pipeline follows this medallion structure:

| Layer | What It Contains | Purpose |
| --- | --- | --- |
| Bronze | Raw data as received | Immutable source of truth |
| Silver | Cleaned and validated data | Trusted for analysis |
| Gold | Aggregated, business-ready data | Dashboards and reporting |
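The three layers can be illustrated with plain Python data structures. This is a simplified sketch, not a production design: the sample events and the helper names `to_silver` and `to_gold` are assumptions made for this example, and a real pipeline would usually keep each layer in a warehouse or data lake.

```python
from collections import defaultdict

# Bronze: raw records exactly as received (hypothetical sample events).
bronze = [
    {"user": "alice", "amount": "10.50", "country": "us"},
    {"user": "bob", "amount": "not-a-number", "country": "US"},
    {"user": "carol", "amount": "4.50", "country": "de"},
    {"user": "alice", "amount": "2.00", "country": "US"},
]

def to_silver(raw_rows):
    """Silver: validate and standardize; rows that fail validation are left out."""
    silver_rows = []
    for row in raw_rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # invalid amount; the raw row still exists in bronze
        silver_rows.append(
            {"user": row["user"], "amount": amount, "country": row["country"].upper()}
        )
    return silver_rows

def to_gold(silver_rows):
    """Gold: aggregate to a business-ready shape (total amount per country)."""
    totals = defaultdict(float)
    for row in silver_rows:
        totals[row["country"]] += row["amount"]
    return dict(totals)

silver = to_silver(bronze)
gold = to_gold(silver)
print(gold)
```

Because the bronze list is never modified, the silver and gold layers can be recomputed from it at any time.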

Data Engineering Best Practices

Here are the most essential data engineering best practices:

- Always Be Ready to Rebuild

As technology progresses, you need to adapt as well.

Make sure you can rebuild your entire data warehouse from your source data.

This ensures you have a recovery path in case of issues.
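One way to picture this is a rebuild function that throws away every derived table and recreates it from the raw data alone. The sketch below uses an in-memory SQLite database; the table names `raw_sales` and `daily_totals` and the function `rebuild_warehouse` are hypothetical.

```python
import sqlite3

def rebuild_warehouse(conn):
    """Drop the derived table and recreate it from the raw table only."""
    conn.execute("DROP TABLE IF EXISTS daily_totals")
    conn.execute(
        "CREATE TABLE daily_totals AS "
        "SELECT day, SUM(amount) AS total FROM raw_sales GROUP BY day"
    )
    conn.commit()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_sales (day TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO raw_sales VALUES (?, ?)",
    [("2024-01-01", 5.0), ("2024-01-01", 7.0), ("2024-01-02", 3.0)],
)

rebuild_warehouse(conn)
conn.execute("DELETE FROM daily_totals")  # simulate a damaged derived table
rebuild_warehouse(conn)                   # recover it from the source data

totals = conn.execute("SELECT day, total FROM daily_totals ORDER BY day").fetchall()
print(totals)
```

The recovery works only because nothing in `daily_totals` exists that cannot be derived from `raw_sales`.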

- Test Everything

Make it a habit to test your data at every stage.

This includes validating your data and transformation logic.

Moreover, perform final checks on data outputs.
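As a rough sketch, the three kinds of checks (input data, transformation logic, final output) might look like this in Python. The transformation `normalize_email` and the check functions are hypothetical examples, and a real project would more likely run them with a test framework such as `pytest` or `unittest`.

```python
def normalize_email(value):
    """Transformation under test: trim whitespace and lowercase an email."""
    return value.strip().lower()

def validate_input(rows):
    """Input check: every row must carry a non-empty 'email' field."""
    return all(row.get("email") for row in rows)

def test_input_validation():
    assert validate_input([{"email": "a@example.com"}])
    assert not validate_input([{"email": ""}])

def test_transformation_logic():
    assert normalize_email("  Alice@Example.COM ") == "alice@example.com"

def test_output():
    rows = [{"email": " A@Example.com"}, {"email": "b@example.com "}]
    output = [normalize_email(row["email"]) for row in rows]
    assert len(output) == len(rows)  # no rows lost along the way
    assert all(email == email.strip().lower() for email in output)

# Run every check; any failure raises AssertionError.
for test in (test_input_validation, test_transformation_logic, test_output):
    test()
print("all checks passed")
```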

- Recheck Your Pipeline Efficiency

Running your data pipelines twice on the same input should produce the same result.

Make sure your pipeline is accurate and responsive.
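Here is a minimal sketch of a load step that gives the same result no matter how many times it runs. It assumes a hypothetical `customers` table keyed on `customer_id` and uses SQLite's `INSERT OR REPLACE`, so a re-run overwrites existing rows instead of duplicating them.

```python
import sqlite3

def load_customers(conn, rows):
    """Load rows keyed on customer_id; re-running replaces rows, never duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(customer_id INTEGER PRIMARY KEY, name TEXT)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO customers (customer_id, name) VALUES (?, ?)", rows
    )
    conn.commit()

conn = sqlite3.connect(":memory:")
batch = [(1, "Alice"), (2, "Bob")]  # hypothetical input batch

load_customers(conn, batch)
first_run = conn.execute("SELECT * FROM customers ORDER BY customer_id").fetchall()

load_customers(conn, batch)  # run the same load a second time
second_run = conn.execute("SELECT * FROM customers ORDER BY customer_id").fetchall()

print(first_run == second_run)
```

A plain `INSERT` without a key would have doubled the rows on the second run, which is exactly the kind of drift this practice is meant to prevent.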

- Document Your Data

Proper documentation of your data is very important.

It enables better scheduling and refined data pipelines.

- Monitor Continuously

Set up alerts for any pipeline failures or data issues.

This will ensure you can fix your problems before they affect your users.
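A simple way to start is to wrap each pipeline step so that a failure is logged and passed to an alert hook. In the sketch below, `run_with_alerts` and the step names are hypothetical, and a plain list stands in for a real alert channel such as email or chat.

```python
import logging

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("pipeline")

def run_with_alerts(step_name, step, alert):
    """Run one pipeline step; on failure, log it and call the alert hook."""
    try:
        result = step()
    except Exception as exc:
        logger.error("step %s failed: %s", step_name, exc)
        alert(f"{step_name} failed: {exc}")
        return None
    logger.info("step %s succeeded", step_name)
    return result

sent_alerts = []  # stand-in for a real alert channel

def failing_step():
    raise ValueError("source file is empty")

failed_result = run_with_alerts("ingest_orders", failing_step, sent_alerts.append)
ok_result = run_with_alerts("count_rows", lambda: 42, sent_alerts.append)
print(sent_alerts)
```

Only the failing step produces an alert; the successful step is logged and returns its result as usual.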

Data Engineering Services: Build or Outsource?

Considering whether you should hire or outsource your data engineers?

Here is what I recommend:

| Situation | Recommendation |
| --- | --- |
| You have 0-1 data people | Outsource to get started faster |
| Data is core to your product | Hire in-house engineers |
| You have a one-time migration | Outsource the project |
| You’re a startup with funding | Hire a senior engineer first |

Conclusion

Building the right data engineering team cannot happen overnight.

Laying the right data foundation is a gradual process.

Make sure that you follow all data engineering best practices from day one. Moreover, regular testing and quality checks are always beneficial.

Also, your data engineering team structure needs to scale with your needs.

Still unsure where to start with your data engineering needs?

Consider partnering with Augmented Systems’ data engineering services. Our experts provide the best way to build your data pipeline’s initial stages.

Whether it’s data engineering, data analytics services, or architecture, we can help. Our experts have years of experience in delivering reliable data insights.

Contact Augmented Systems today to receive a free consultation for your data engineering needs.

FAQs

1. What is data engineering?

Data engineering is the practice of building systems that collect, store, and prepare data for analysis. It’s the foundation that enables data scientists and analysts to do their jobs effectively.

2. What does a data engineer do?

So, what does a data engineer do? They build data pipelines, clean and transform data, ensure data quality, and create automated systems that move data from sources to destinations, such as data warehouses.

3. What is a good data engineering team structure?

A data engineering team structure starts with one data engineer for small teams, adds a senior engineer and an analytics engineer for growing teams, and includes specialized roles like a data architect for enterprise-scale teams.

4. What are key data engineering best practices?

Data engineering best practices include building idempotent pipelines (that produce the same results every time), testing everything, documenting as you build, monitoring continuously, and always being able to rebuild from raw data.

5. What is data pipeline architecture?

Data pipeline architecture is the blueprint for how data moves through your systems. A modern approach uses a medallion structure with bronze (raw), silver (cleaned), and gold (business-ready) layers.