If you think your data is confusing, wait until you try to decide on the right data platform.

Today, you have two main platforms to choose from for storing and analyzing your data:

- Data lakehouse

- Data warehouse

Each of these platforms has its own benefits and drawbacks.

In this guide, I will break down how a data lakehouse and a data warehouse differ, so you can choose the right one.

Let’s dive in by first describing each platform:

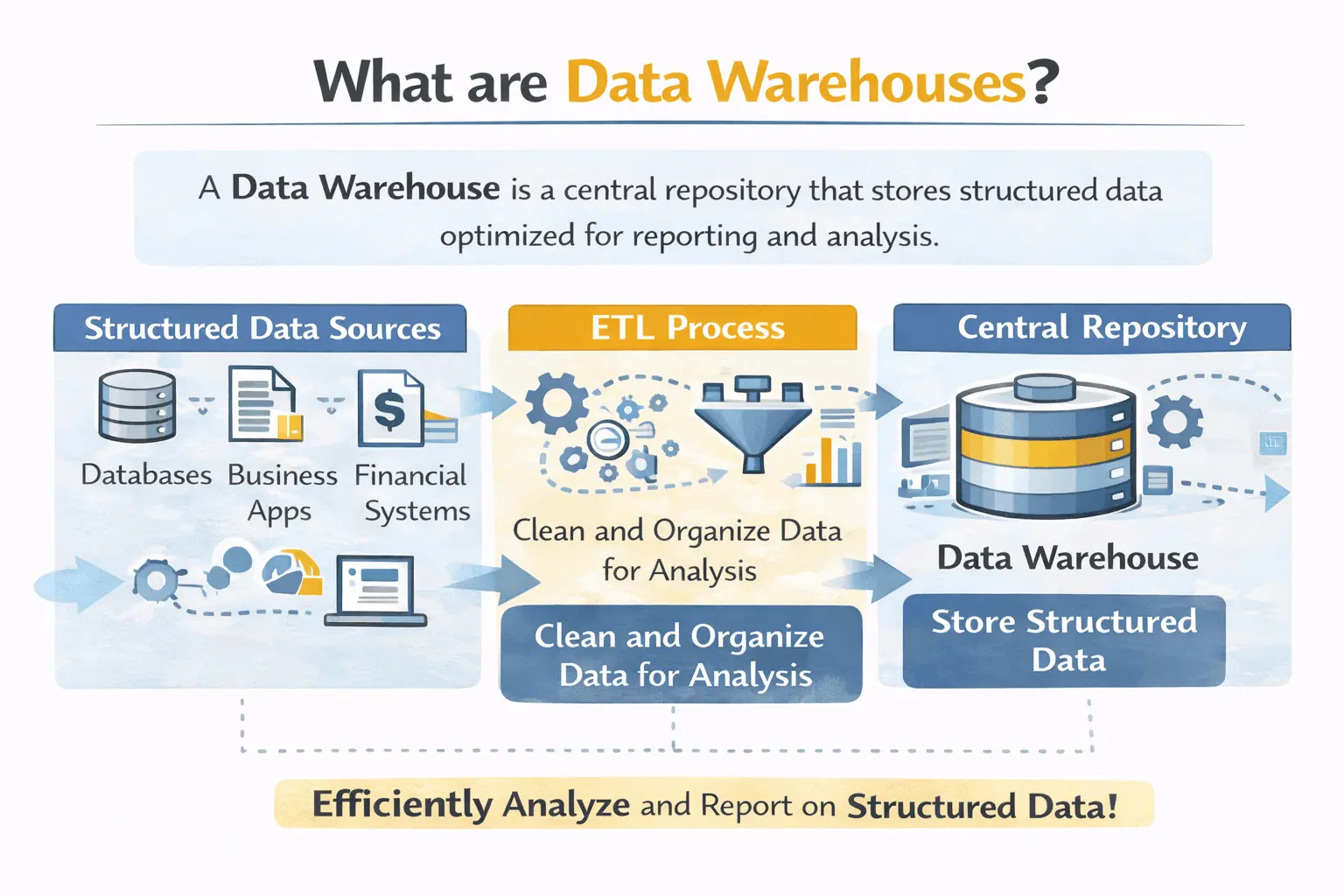

What is a Data Warehouse?

Let’s start our comparison with the data warehouse.

In simple terms, a data warehouse is a highly organized library of data. Every data point has its own place and label.

Data warehouses store structured data: data that has already been cleaned and organized into tables.

Examples of such data include customer records and financial records.

Key Characteristics:

- Data is cleaned and transformed before it is loaded

- It follows schema-on-write, which requires a predefined structure

- Queries run quickly, especially predictable, repeated SQL queries

- Data warehouses are usually quite expensive
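To make schema-on-write concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in for a warehouse. The `sales` table, its columns, and the `load_rows` helper are hypothetical; the point is only that rows violating the predefined schema are rejected at write time, before anyone can query them.

```python
import sqlite3

# In-memory database standing in for a warehouse table (hypothetical schema).
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE sales (
        order_id   INTEGER PRIMARY KEY,
        customer   TEXT NOT NULL,
        amount     REAL NOT NULL CHECK (amount >= 0),
        order_date TEXT NOT NULL
    )
    """
)


def load_rows(conn, rows):
    """Insert rows one by one; rows that break the schema are rejected on write."""
    accepted, rejected = 0, []
    for row in rows:
        try:
            with conn:  # commits on success, rolls back on error
                conn.execute(
                    "INSERT INTO sales (order_id, customer, amount, order_date) "
                    "VALUES (?, ?, ?, ?)",
                    row,
                )
            accepted += 1
        except sqlite3.IntegrityError as exc:
            rejected.append((row, str(exc)))
    return accepted, rejected


rows = [
    (1, "Acme", 120.0, "2024-01-15"),
    (2, None, 80.0, "2024-01-20"),      # missing customer: NOT NULL violation
    (3, "Globex", -5.0, "2024-02-02"),  # negative amount: CHECK violation
]
accepted, rejected = load_rows(conn, rows)
print(accepted, len(rejected))  # 1 2
```

Only the clean row ever reaches the table, which is why warehouse queries can trust the data they read.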

The main drawback of this approach is that it is not designed for unstructured data.

This means you cannot easily store images or videos directly in a data warehouse.

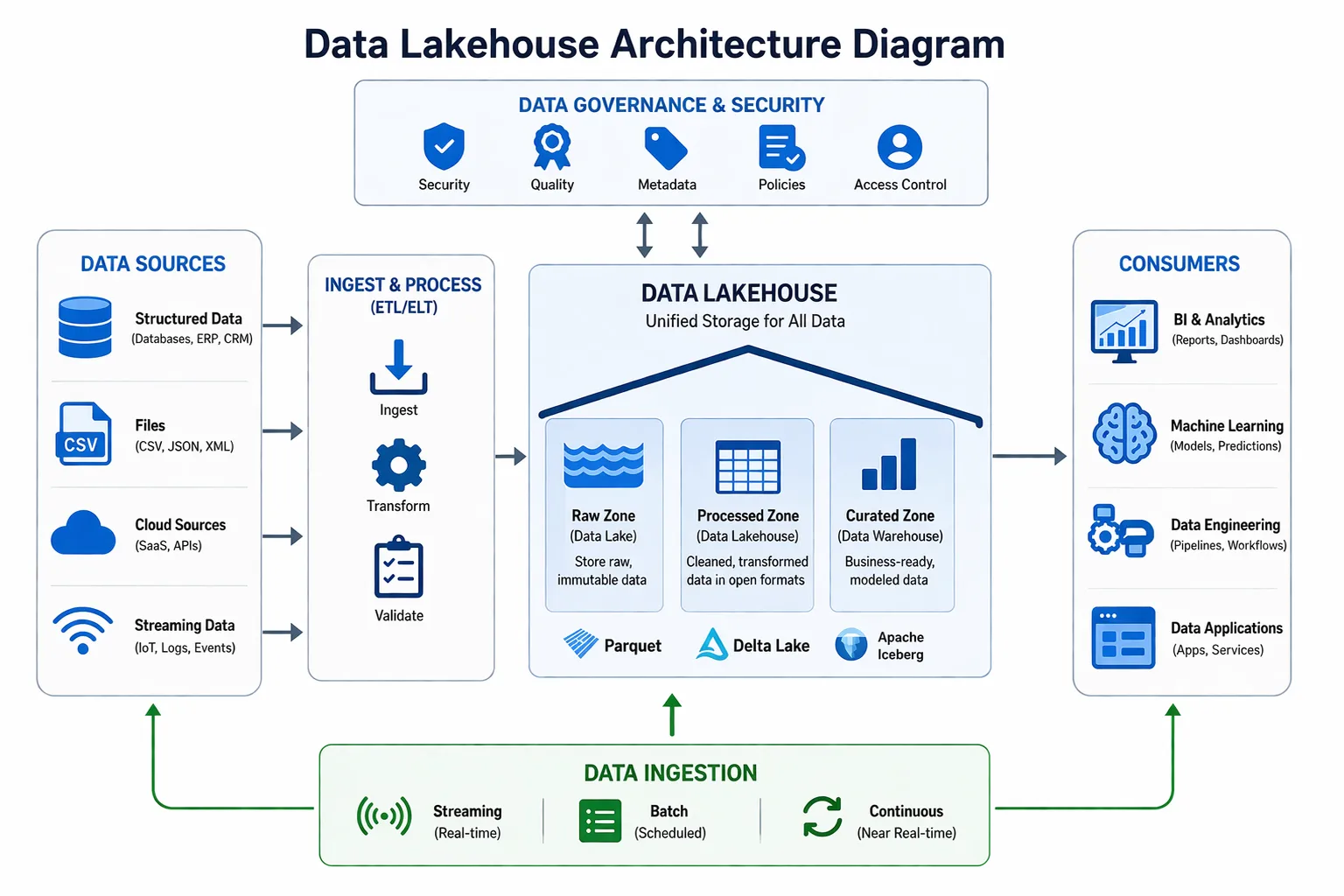

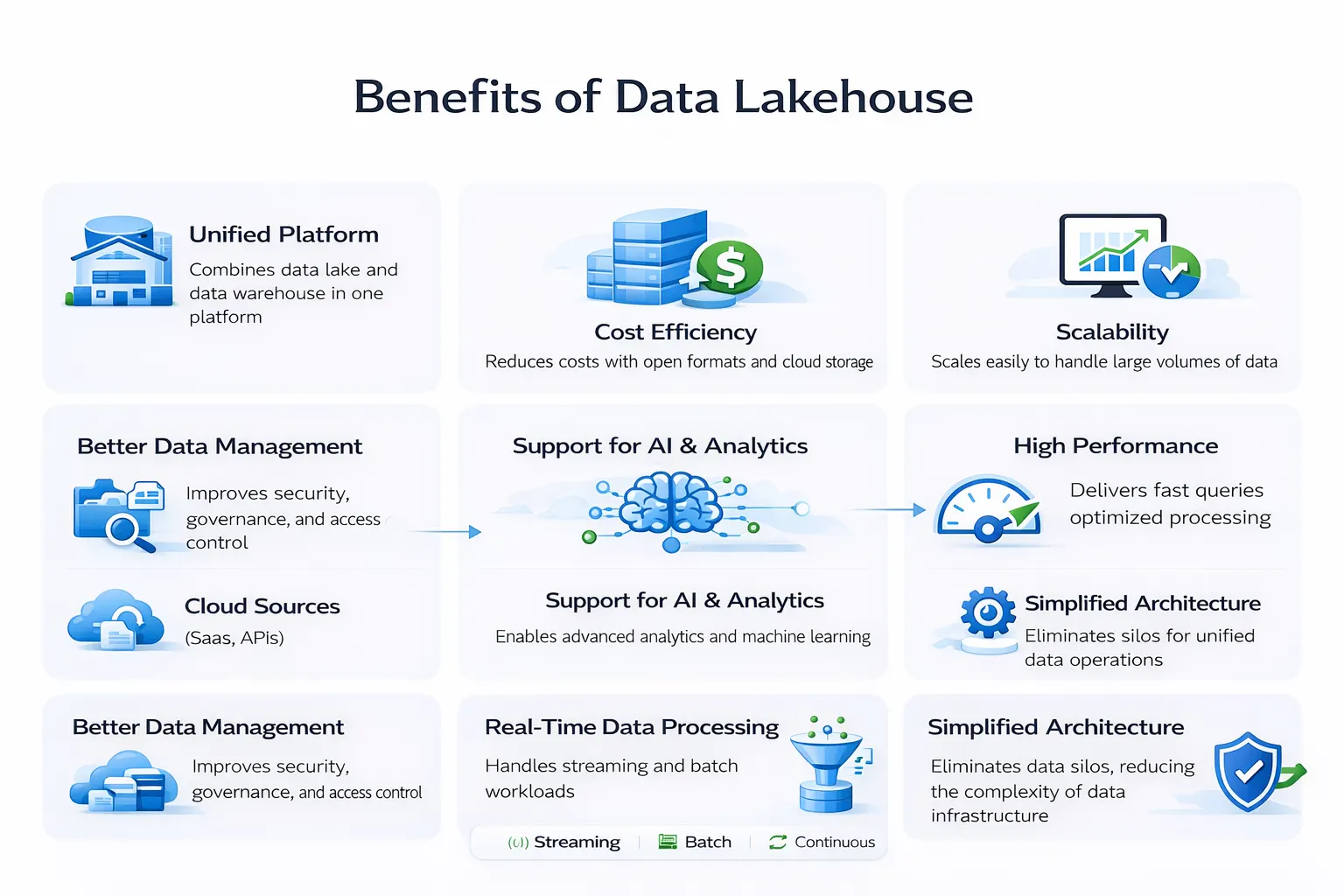

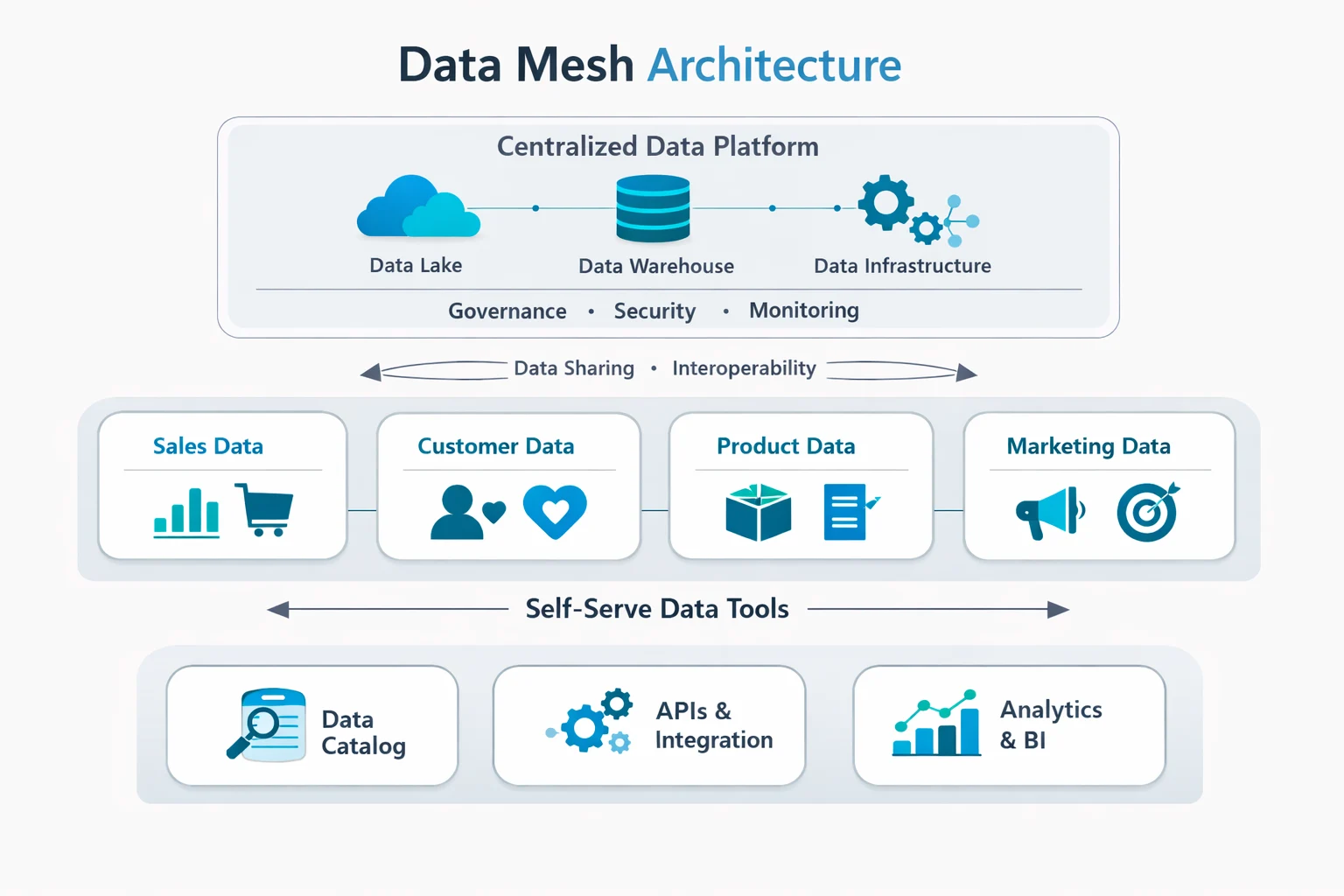

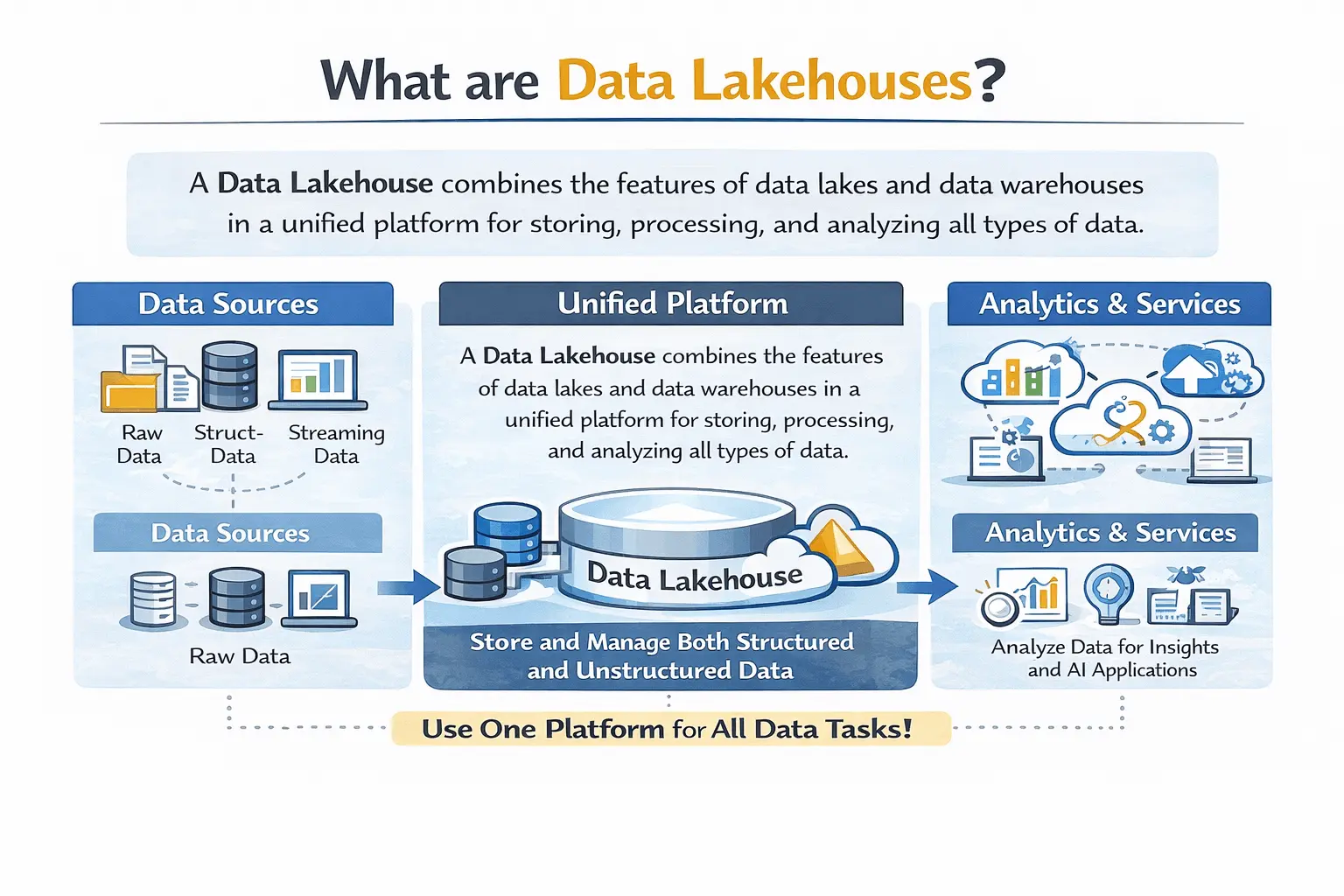

What is a Data Lakehouse?

So, what is a data lakehouse?

It is essentially a combination of a data lake and a data warehouse. It keeps data, including unstructured data, in low-cost data lake storage, but answers queries like a warehouse.

In simple terms, it provides the benefits of both a data warehouse and a data lake.

Think of it like a library that stores both organized and messy books.
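How can cheap lake storage answer queries like a warehouse? Open table formats such as Delta Lake, Apache Iceberg, and Apache Hudi add a metadata layer on top of plain data files, so a table version is defined by the set of files recorded in that metadata. The sketch below is a heavily simplified toy of that idea, assuming local files in place of object storage. `ToyTable` is hypothetical and is not the API of any of these projects.

```python
import json
import os
import tempfile
import uuid
from typing import List, Optional


class ToyTable:
    """Hypothetical toy table: data files plus a commit log (not a real table format)."""

    def __init__(self, root: str):
        self.root = root
        self.log_dir = os.path.join(root, "_log")
        os.makedirs(self.log_dir, exist_ok=True)

    def _versions(self) -> List[int]:
        # Each committed version is one numbered JSON file in the log directory.
        return sorted(int(name.split(".")[0]) for name in os.listdir(self.log_dir))

    def append(self, records: List[dict]) -> int:
        # 1. Write the data file first; on its own it is invisible to readers.
        data_file = f"part-{uuid.uuid4().hex}.jsonl"
        with open(os.path.join(self.root, data_file), "w") as f:
            for record in records:
                f.write(json.dumps(record) + "\n")
        # 2. Commit by creating the next log entry. Mode "x" refuses to overwrite
        #    an existing entry, a simple stand-in for an atomic commit step.
        version = len(self._versions())
        with open(os.path.join(self.log_dir, f"{version:05d}.json"), "x") as f:
            json.dump({"add": data_file}, f)
        return version

    def read(self, version: Optional[int] = None) -> List[dict]:
        # Readers only see files referenced by committed log entries,
        # optionally "time traveling" to an older version.
        rows = []
        for v in self._versions():
            if version is not None and v > version:
                break
            with open(os.path.join(self.log_dir, f"{v:05d}.json")) as f:
                data_file = json.load(f)["add"]
            with open(os.path.join(self.root, data_file)) as f:
                rows.extend(json.loads(line) for line in f)
        return rows


root = tempfile.mkdtemp()
table = ToyTable(root)
table.append([{"id": 1, "amount": 10.0}])
table.append([{"id": 2, "amount": 25.5}, {"id": 3, "amount": 4.5}])
print(len(table.read()))      # 3
print(table.read(version=0))  # [{'id': 1, 'amount': 10.0}]
```

Real formats also track schemas, deletes, statistics for query pruning, and concurrent writers, none of which this toy attempts.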

Key Characteristics:

- Stores both structured and unstructured data

- Supports schema-on-read, applying structure when you query the data rather than when you load it

- Delivers strong, warehouse-like query performance

- Cheaper than running a separate data lake and data warehouse
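Schema-on-read means raw records are stored as they arrive, and each reader decides which structure to apply at query time. The sketch below shows the idea with plain Python and the standard `json` module; the sample events, `PURCHASE_SCHEMA`, and `read_with_schema` are hypothetical. In a real lakehouse, a query engine does this work over files in object storage.

```python
import json

# Raw events land in the lake as-is; records do not need to share one structure.
raw_lines = [
    '{"user": "ana", "event": "click", "ts": "2024-03-01T10:00:00"}',
    '{"user": "ben", "event": "purchase", "amount": "19.99", "ts": "2024-03-01T10:05:00"}',
    '{"user": "ana", "event": "purchase", "amount": 5, "ts": "2024-03-01T11:00:00", "coupon": "SPRING"}',
]

# The schema is chosen by the reader, at query time (hypothetical "purchases" view).
PURCHASE_SCHEMA = {"user": str, "amount": float}


def read_with_schema(lines, schema, where=None):
    """Parse raw JSON lines and cast only the requested fields while reading."""
    for line in lines:
        record = json.loads(line)
        if where and not where(record):
            continue
        # Missing fields become None; extra fields (like "coupon") are ignored.
        yield {
            field: cast(record[field]) if record.get(field) is not None else None
            for field, cast in schema.items()
        }


purchases = list(
    read_with_schema(raw_lines, PURCHASE_SCHEMA, where=lambda r: r.get("event") == "purchase")
)
print(purchases)  # [{'user': 'ben', 'amount': 19.99}, {'user': 'ana', 'amount': 5.0}]
```

Note that the string `"19.99"` and the integer `5` are only normalized to floats when read; the stored raw data is untouched, so another reader could apply a different schema to the same lines.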

Quick Comparison: Data Lakehouse vs Data Warehouse

Here is a short comparison between these two data platforms:

| Feature | Data Warehouse | Data Lakehouse |
| --- | --- | --- |
| Data types | Mainly structured | All types (text, images, JSON) |
| Schema approach | Schema-on-write | Schema-on-read |
| Storage cost | Expensive | Cheap |
| Query speed | Very fast | Fast (warehouse-like) |
| Data quality | High (cleaned before entry) | Flexible (cleaned when needed) |
| Best for | Business reporting, BI dashboards | Data science, AI, real-time analytics |

Performance Comparison

You might be wondering about the differences between a data lakehouse and a data warehouse in terms of performance.

In practice, they are quite similar, and performance depends largely on your workload and how you use each platform.

Data warehouses execute standard SQL queries quickly. They excel at:

- Monthly sales reports

- Business financial statements

- Advanced dashboards with predictable queries
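The kind of predictable query a warehouse is built for looks like the monthly sales report below. This sketch again uses Python's `sqlite3` module as a stand-in for a warehouse engine, and the `sales` table and its rows are made up for illustration. Because the schema is fixed, the same report query can be saved and rerun every month.

```python
import sqlite3

# sqlite3 stands in for a warehouse engine; the table and rows are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (order_date TEXT NOT NULL, region TEXT NOT NULL, amount REAL NOT NULL)"
)
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("2024-01-05", "EU", 100.0),
        ("2024-01-19", "US", 250.0),
        ("2024-02-03", "EU", 75.0),
        ("2024-02-28", "EU", 25.0),
    ],
)

# A predictable, repeatable report query: total sales per month and region.
MONTHLY_REPORT = """
    SELECT strftime('%Y-%m', order_date) AS month,
           region,
           SUM(amount) AS total
    FROM sales
    GROUP BY month, region
    ORDER BY month, region
"""
report = conn.execute(MONTHLY_REPORT).fetchall()
for row in report:
    print(row)
# ('2024-01', 'EU', 100.0)
# ('2024-01', 'US', 250.0)
# ('2024-02', 'EU', 100.0)
```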

Data lakehouses, in comparison, support a wider range of workloads.

They can match warehouse performance for many SQL workloads. On top of that, they can also handle:

- Complex data science workloads

- Machine learning model training

- Real-time streaming data

- Big data processing at petabyte scale
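A practical consequence of this breadth is that BI and data science can work from the same copy of the data. The sketch below is a minimal illustration in plain Python, assuming a hypothetical `orders` table with made-up rows: one function computes a BI-style aggregate, and another derives simple ML features from the same records, without a second copy.

```python
from collections import defaultdict

# One shared copy of hypothetical order records, as a lakehouse table might hold them.
orders = [
    {"customer": "ana", "amount": 20.0, "returned": False},
    {"customer": "ana", "amount": 35.0, "returned": True},
    {"customer": "ben", "amount": 50.0, "returned": False},
]


def revenue_by_customer(rows):
    """BI-style aggregate: revenue per customer, excluding returned orders."""
    totals = defaultdict(float)
    for r in rows:
        if not r["returned"]:
            totals[r["customer"]] += r["amount"]
    return dict(totals)


def ml_features(rows):
    """ML-style features from the same rows: (order count, return rate) per customer."""
    counts, returns = defaultdict(int), defaultdict(int)
    for r in rows:
        counts[r["customer"]] += 1
        returns[r["customer"]] += int(r["returned"])
    return {c: (counts[c], returns[c] / counts[c]) for c in counts}


print(revenue_by_customer(orders))  # {'ana': 20.0, 'ben': 50.0}
print(ml_features(orders))          # {'ana': (2, 0.5), 'ben': (1, 0.0)}
```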

Cost Comparison of Data Lakehouse vs Data Warehouse

Here is how a data lakehouse and a data warehouse differ in terms of cost:

| Cost Factor | Data Warehouse | Data Lakehouse |
| --- | --- | --- |
| Storage | Expensive proprietary formats | Cheap object storage (S3, ADLS) |
| Compute | Pay for usage | Pay for usage |
| Data duplication | High (copies for different uses) | Low (single copy of truth) |
| Total cost | Higher | 50-80% lower |
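The data duplication row is simple arithmetic: storage cost scales with data volume times the number of copies kept. The sketch below uses placeholder inputs, not real vendor prices, and assumes three copies in a warehouse-centric setup versus one shared copy in a lakehouse. It models only duplication, not compute or differences in per-TB pricing, so it does not reproduce the total-cost figure in the table.

```python
def monthly_storage_cost(data_tb: float, copies: int, price_per_tb_month: float) -> float:
    """Storage cost scales linearly with data volume and with every extra copy."""
    return data_tb * copies * price_per_tb_month


# Placeholder inputs, not real prices: 50 TB of data at 20 currency units per TB-month.
DATA_TB = 50
PRICE = 20.0

# Assumption: a warehouse-centric setup keeps 3 copies (raw landing zone,
# warehouse tables, departmental marts); the lakehouse keeps 1 shared copy.
duplicated = monthly_storage_cost(DATA_TB, 3, PRICE)
single_copy = monthly_storage_cost(DATA_TB, 1, PRICE)
print(duplicated, single_copy)  # 3000.0 1000.0
```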

Scalability Comparison of Data Lakehouse vs Data Warehouse

Here is how these two data platforms compare in terms of scalability:

| Scalability Factor | Data Warehouse | Data Lakehouse |
| --- | --- | --- |
| Storage scaling | Limited by proprietary systems | Virtually unlimited (cloud object storage) |
| Compute scaling | Can scale up/down | Can scale independently from storage |
| Data volume | Handles terabytes to petabytes | Handles petabytes to exabytes |
| User growth | Can hit limits | Scales with cloud providers |

Data Lakehouse Use Cases

There are many data lakehouse use cases your business can benefit from.

Some of these include:

| Use Case | Why Lakehouse Works |
| --- | --- |
| Real-time analytics | Handles streaming data natively |
| Data science & AI | Stores raw data for ML models |
| BI reporting | Fast enough for dashboards |
| Data sharing | Single source of truth across teams |
| Historical analysis | Cheap storage for years of data |

When to Choose Either Option

Still unsure which platform belongs in your data migration plans?

Here are my recommendations.

Choose a Data Warehouse for:

- Storing clean, structured data

- Basic business reporting

- Smaller data volumes measured in terabytes, not petabytes

Choose a Data Lakehouse for:

- Storing both structured and unstructured data

- Running BI tools (such as Power BI) and data science on the same data

- Avoiding expensive data duplication across systems

- Ingesting real-time data from streaming sources

- Scaling easily as your data grows
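The recommendations above can be condensed into a rule-of-thumb function. This is a hypothetical sketch, not a formal selection method: the `Workload` fields and the 1,000 TB (roughly 1 PB) threshold are assumptions chosen to mirror the two lists.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a data workload; fields mirror the two checklists."""
    has_unstructured_data: bool = False
    needs_data_science_or_ml: bool = False
    needs_streaming: bool = False
    data_tb: float = 1.0


def recommend_platform(w: Workload) -> str:
    """Rule of thumb: any lakehouse-only need, or petabyte scale, tips the choice."""
    if w.has_unstructured_data or w.needs_data_science_or_ml or w.needs_streaming:
        return "data lakehouse"
    if w.data_tb >= 1000:  # assumed threshold: roughly 1 PB (1 PB = 1,000 TB)
        return "data lakehouse"
    return "data warehouse"


print(recommend_platform(Workload()))                      # data warehouse
print(recommend_platform(Workload(needs_streaming=True)))  # data lakehouse
```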

Conclusion

When comparing data lakehouses vs. data warehouses, the right choice depends on what you need.

If you just need basic storage and reporting for structured data, a data warehouse is sufficient.

But if you need flexible access to all your data and modern capabilities such as AI and real-time analytics, a data lakehouse is far better.

A data lakehouse removes many of the limitations of a data warehouse. It can also make semi-structured and unstructured data available for fast queries.

Need assistance in implementing data lakehouses in your current business?

Do not worry! Our team of experts at Augmented Systems can help set it up!

Augmented Systems has been known for decades as the leading software consultant for global businesses.

Whether you need a data warehouse or a lakehouse, we have you covered! Our experts can also design a hybrid architecture if needed.

So, are you ready to switch to a modern way to store your data?

Simply contact Augmented Systems today to receive a free consultation.

FAQs

1. What is the main difference between a data lakehouse and a data warehouse?

The main difference between a data lakehouse and a data warehouse is flexibility. Data warehouses mainly store structured, cleaned data. Data lakehouses store all data types, including structured, semi-structured, and unstructured data, in one place and at a much lower cost.

2. What is a data lakehouse in simple terms?

What is a data lakehouse? It’s a modern data platform that combines cheap storage (like a data lake) with fast queries (like a data warehouse). You get the best of both worlds without managing two separate systems.

3. What is a data lake vs. a data warehouse?

What is a data lake vs. a data warehouse? A data lake stores raw data cheaply but can be slow to query. A data warehouse stores cleaned data for fast reporting, but it is expensive to maintain. A lakehouse gives you both benefits in one platform.

4. What are common data lakehouse use cases?

Data lakehouse use cases include real-time analytics, data science and AI model training, business intelligence dashboards, cross-team data sharing, and long-term historical analysis at a petabyte scale.

5. What are the key data warehouse limitations?

Data warehouse limitations include high storage costs, limited support for unstructured data (e.g., images or video), rigid schemas that are hard to change, and the expense of duplicating data across different use cases.